A functional society is built on a store of publicly accessible information: its shared history, which evolves over time.

But who gets write privileges to this public ledger, and how can they be trusted?

A society that cannot guard against individuals freely rewriting its history, rejiggering it to their advantage, is doomed to mob rule. Each group in momentary power or privilege will grab the typewriter to write a new history and the eraser to obliterate what came before.

This is not to say that societies cannot function if they rely on a shared history consisting of fake news. Indeed, they may even flourish if that fake news acts as a tether: think of nation-founding myths designed to promote social cohesion.

The key questions at this moment are:

Where is the tractable problem?

What kind of small experiments might help us to address it?

Yet neither question resolves the vast and potentially intractable problem of information-stream fragmentation along ideological lines.

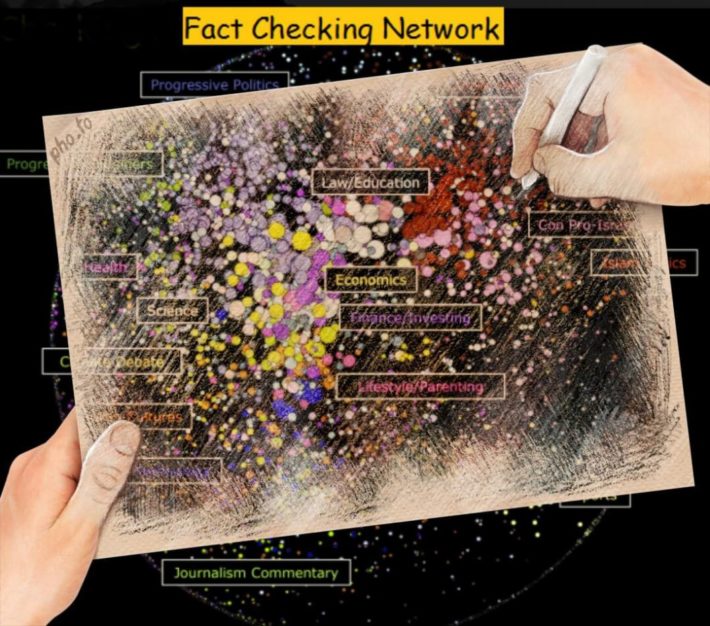

This image (if somehow you can’t see it, lemme tell you, it’s good. You missed out) shows an “attentive cluster” analysis of blogs that are interested in the topic of fact-checking. Blogs that link to similar sites are grouped together in bubbles, or placed closer to each other, and the whole group of bubbles is arranged along a left-to-right political dimension.

The image shows that although both profess to care about “facts,” progressive and conservative blogs tend to link to radically different things. Each side constructs a different reality.
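To make the grouping idea concrete, here is a minimal sketch of clustering blogs by what they link to. It is not the method behind the image, which the post does not describe. The blog names, the linked sites, the Jaccard overlap measure, and the 0.4 threshold are all assumptions chosen for illustration.

```python
from itertools import combinations

# Hypothetical data: each blog maps to the set of sites it links to.
BLOG_LINKS = {
    "blog_a": {"site1.example", "site2.example", "site3.example"},
    "blog_b": {"site1.example", "site2.example", "site4.example"},
    "blog_c": {"site7.example", "site8.example", "site9.example"},
    "blog_d": {"site7.example", "site8.example", "site5.example"},
}


def jaccard(a, b):
    """Fraction of linked sites two blogs share (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_blogs(blog_links, threshold=0.4):
    """Group blogs whose link overlap meets the (assumed) threshold."""
    # Union-find: every blog starts out in its own group.
    parent = {name: name for name in blog_links}

    def find(name):
        while parent[name] != name:
            parent[name] = parent[parent[name]]  # path compression
            name = parent[name]
        return name

    # Merge the groups of any two blogs that link to similar sites.
    for left, right in combinations(blog_links, 2):
        if jaccard(blog_links[left], blog_links[right]) >= threshold:
            parent[find(left)] = find(right)

    clusters = {}
    for name in blog_links:
        clusters.setdefault(find(name), set()).add(name)
    return sorted(sorted(members) for members in clusters.values())


if __name__ == "__main__":
    for members in cluster_blogs(BLOG_LINKS):
        print(members)
```

In this toy data, `blog_a` and `blog_b` share half of their combined links and land in one bubble, while `blog_c` and `blog_d` form another. No link crosses between the two groups, which is the fragmentation the image illustrates.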

The current, unsustainable band-aid is to coerce self-censorship and shut down user-generated content. Yet this, as shitty a thing as it is, does nothing to break into hermetically sealed misinformation environments.

So, the actual big problem to solve is the human one. People do not really want the truth. They sometimes double down on wrong beliefs after being corrected, and they can become more wrong and harder to sway as they become more knowledgeable about a subject or more highly educated.

Ultimately, the psychology research says that you move people not so much through factual rebuttals as through emotional appeals that resonate with their core values. Those values, in turn, shape how people receive facts and how they weave them into a narrative that imparts a sense of identity, belonging, and security.

These technological misadventures in combating misinformation are behind schedule (election schedule?) in two areas:

1. Speed. You have to be right there in real time correcting falsehoods before they get loose in the information ecosystem, before the victim is shot. I suspect that the best we can hope for is a stalemate in the misinformation arms race.

2. Selective Exposure. You’ve got to find ways to break into networks where you aren’t really wanted.

Is blockchain a solution? Perhaps. Think of a blockchain as a distributed history, where the database is updated and kept secure through some communal consensus algorithm. But whether it’s a solution or tech cultism will take generations to know. And…

…that answer depends on what parts of our shared history we can expect to manage through a computer-based blockchain (and on the details of the consensus protocol).
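For readers who want to see what a tamper-evident shared history looks like in practice, here is a minimal sketch. It is a toy, not how Bitcoin or any real blockchain works: the function names, the record strings, and especially `majority_history` (a simple head count standing in for a real consensus protocol) are assumptions made for illustration.

```python
import hashlib
import json
from collections import Counter

GENESIS_HASH = "0" * 64  # assumed placeholder for "no previous block"


def block_hash(index, record, prev_hash):
    """Hash a block's contents together with the hash of the block before it."""
    payload = json.dumps(
        {"index": index, "record": record, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(chain, record):
    """Add a record to the history, linking it to everything written before."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    index = len(chain)
    chain.append({
        "index": index,
        "record": record,
        "prev_hash": prev_hash,
        "hash": block_hash(index, record, prev_hash),
    })
    return chain


def is_valid(chain):
    """Return False if any block was edited after it was written."""
    prev_hash = GENESIS_HASH
    for index, block in enumerate(chain):
        if block["index"] != index or block["prev_hash"] != prev_hash:
            return False
        if block["hash"] != block_hash(index, block["record"], prev_hash):
            return False
        prev_hash = block["hash"]
    return True


def majority_history(copies):
    """Toy stand-in for consensus: the valid history most participants hold."""
    valid = [chain for chain in copies if chain and is_valid(chain)]
    if not valid:
        return None
    heads = Counter(chain[-1]["hash"] for chain in valid)
    winning_hash, _ = heads.most_common(1)[0]
    return next(chain for chain in valid if chain[-1]["hash"] == winning_hash)
```

Changing an old record in place makes `is_valid` fail, because the stored hash no longer matches the contents. Rewriting the whole chain from scratch yields a valid but different history, and it only wins if most participants adopt it. That is the eraser-and-typewriter problem restated as a question about who counts as the majority, which is why the details of the consensus protocol matter so much.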

The Bitcoin blockchain appears to have solved the fake news problem for its particular application (essentially, debiting and crediting money accounts, though broader applications appear possible). Might the same principles be used to manage the database at Wikipedia? Ultimately, the fundamental problem with fake news is not that we don’t have the technology to prevent it. The problem seems more deeply rooted in the human trait of preferring truthiness over truth, especially if truthiness salves where the truth might hurt.

The end?
